Globally, 285 million people live with visual impairment, one-fifth of them in India. India alone is home to 4.80 million blind individuals and 4.69 million people with severe visual impairment. These numbers highlight the need for innovative solutions like object detection glasses that can reshape how visually impaired individuals navigate their surroundings.

Traditional navigation aids have limitations, but recent advances in AI smart glasses are changing the landscape. AI object detection technology enables wearable devices that provide real-time environmental awareness. Recent research shows these systems use components such as a Raspberry Pi, cameras, and ultrasonic sensors to recognize obstacles and identify pre-registered individuals. Some devices can detect obstacles up to 150 cm away within a 60-degree angular range, making them effective for daily navigation challenges.
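As a rough illustration of how an ultrasonic reading might become an obstacle alert, the sketch below converts an echo round-trip time into a distance and checks it against the 150 cm range and 60-degree field mentioned above. The function names, the speed-of-sound constant (about 343 m/s in air at room temperature), and the assumption that the obstacle's bearing is already known are all illustrative choices, not details taken from the research.

```python
SPEED_OF_SOUND_CM_PER_S = 34_300  # assumed: ~343 m/s in air at about 20 °C
MAX_RANGE_CM = 150.0              # detection range quoted in the text
FIELD_OF_VIEW_DEG = 60.0          # angular range quoted in the text


def echo_to_distance_cm(round_trip_s: float) -> float:
    """Convert an ultrasonic echo round-trip time to a one-way distance."""
    if round_trip_s < 0:
        raise ValueError("round-trip time cannot be negative")
    # The pulse travels to the obstacle and back, so halve the path length.
    return round_trip_s * SPEED_OF_SOUND_CM_PER_S / 2.0


def is_obstacle_in_zone(distance_cm: float, bearing_deg: float) -> bool:
    """Return True if an obstacle is within range and inside the angular cone.

    bearing_deg is measured from straight ahead (0 degrees); negative values
    are to the left, positive values to the right (a hypothetical convention).
    """
    half_angle = FIELD_OF_VIEW_DEG / 2.0
    in_range = 0.0 <= distance_cm <= MAX_RANGE_CM
    in_cone = abs(bearing_deg) <= half_angle
    return in_range and in_cone
```

With these assumptions, a 5 ms echo corresponds to roughly 86 cm, which falls inside the alert zone if the obstacle is within 30 degrees of straight ahead.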

This piece explores how these AI-powered assistive technologies make navigation safer and more intuitive for visually impaired individuals, especially in countries like India where approximately 1% of the population is blind and about 4-5% experience some form of visual impairment.

Navigating the physical environment presents major challenges for visually impaired people worldwide, far beyond what sighted individuals might imagine. The World Health Organization (WHO) reports that out of the 253 million people with visual impairments globally, 36 million are classified as blind, and 217 million experience moderate to severe visual impairment. Nearly 90% of these cases are concentrated in developing countries, highlighting the urgent need for accessible solutions.

Secure and independent mobility remains one of the most significant obstacles for visually impaired individuals. Daily activities that sighted people take for granted become complex challenges requiring careful planning and assistance. The fundamental need to navigate urban environments independently becomes very difficult without sight.

Navigation challenges extend far beyond simple movement from one point to another. People with visual impairments must develop sophisticated techniques to familiarize themselves with new environments, orient themselves within unfamiliar spaces, and move safely between points of interest. This process requires significant mental mapping abilities and constant awareness of potential hazards.

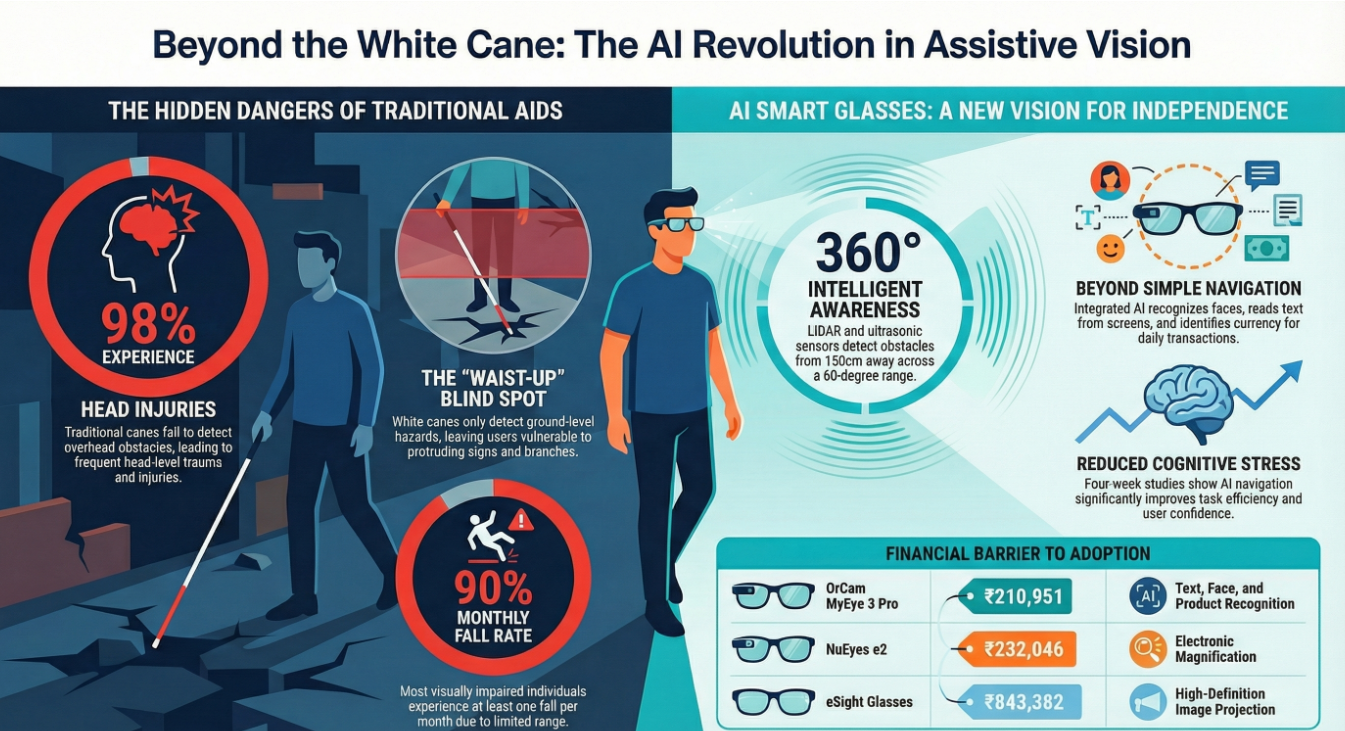

One critical aspect often overlooked is the danger posed by overhead obstacles. Traditional navigation methods fail to detect objects above waist level, creating significant safety risks. A study conducted at the University of California, Santa Cruz with 300 legally blind and completely blind individuals revealed a startling statistic: 98% of those interviewed had experienced one or more head-related injuries. More concerning still, 23% of these injuries required medical attention, including stitches or dental treatment.

The physical dangers are just one dimension of the challenge. These accidents also cause substantial psychological harm, with 26% of head-related injuries resulting in the person's loss of confidence as an independent traveler. This erosion of self-assurance creates a devastating cycle: fear of injury leads to reduced independence, which further diminishes quality of life.

Another major threat is falling, one of the greatest fears among visually impaired individuals. The same University of California, Santa Cruz study found that approximately 90% of all participants experienced some type of fall at least once a month. Even more alarming, about 36% of these falls resulted in injuries requiring medical attention, with many victims needing stitches, orthopedic surgery, or rehabilitation.

Beyond physical safety concerns, visually impaired individuals face numerous other navigational challenges:

- Limited spatial awareness: Without visual cues, understanding the layout of an environment becomes very difficult.

- Environmental unpredictability: Construction zones, temporary obstacles, and crowded areas present constantly changing hazards.

- Inaccessible information: Street signs, building directories, and other navigational information are often not available in accessible formats.

- Social barriers: The need to frequently ask for assistance can be emotionally taxing and sometimes met with discomfort or impatience.

As a result, many visually impaired people limit their travel to a few familiar locations, significantly restricting their independence and participation in society. This limitation affects everything from employment opportunities to social interactions, with a substantial impact on overall quality of life.

The economic burden of vision loss in the United States was calculated at 27.5 billion US dollars in 2012 alone. This figure includes both direct medical costs and indirect costs such as lost productivity, highlighting the enormous societal impact of visual impairment.

The white cane has long been the primary mobility tool for visually impaired individuals. While effective in many situations, this traditional aid has significant limitations that leave users vulnerable to various hazards.

The most critical shortcoming is the cane's inability to detect obstacles above knee or waist level. Since the cane only makes contact with the ground and low-lying objects, it provides no warning about overhanging hazards like tree branches, protruding signs, or open cabinet doors. This limitation directly contributes to the high rate of head injuries mentioned previously.

Furthermore, the white cane has a very limited detection range—typically extending only to the length of the cane itself. This short range gives users precious little time to react to their surroundings, increasing the risk of tripping or falling. For someone navigating a busy urban environment, this minimal warning distance can make the difference between safe passage and injury.

Another significant limitation is the cane's ineffectiveness on certain surfaces. White canes become much less reliable on sand, snow, or uneven terrain, precisely the conditions where additional guidance would be most valuable. Similarly, they struggle to detect sudden changes in elevation like steps or curbs unless direct contact is made, creating falling hazards.

The white cane also presents social challenges. As noted in research, obstacles detected by white canes are sometimes other people, creating potentially uncomfortable interactions. Additionally, white canes cannot identify specific objects like tables or chairs, or determine whether a seat is already occupied, limiting a user's ability to navigate social environments effectively.

Comparative studies add nuance to this picture. Research shows that walking speed with electronic aids is slower than with the traditional white cane. While this might initially seem like a disadvantage for electronic aids, it reflects a more cautious and informed navigation process that reduces accidents.

Guide dogs, another traditional assistive option, also have limitations. While highly effective when properly trained, guide dogs are expensive, require extensive training periods, and demand ongoing care that can be challenging for some visually impaired individuals. Not every visually impaired person can afford or manage the responsibilities of a guide dog, making this solution unavailable to many.

The abandonment rate for electronic assistive devices is estimated to be about 75%, highlighting the difficulty of creating solutions that truly meet users' needs. This high rejection rate underscores how challenging it is to develop technology that offers significant advantages over traditional aids while remaining intuitive and reliable enough for daily use.

Most critically, traditional navigation aids generally cannot provide the kind of rich environmental information that sighted people use constantly. A white cane cannot identify colors, read text, recognize faces, or provide information about the overall layout of a space. This information gap significantly affects a visually impaired person's ability to interact fully with their environment.

To name just one example, visually impaired people face serious difficulties recognizing currency due to the similarity in paper texture and size among various denominations. In countries like Bangladesh, the similar colors between different note values (such as 50 BDT and 200 BDT) make transactions very challenging for those with low vision. Similar challenges arise when trying to identify staircases, washrooms, chairs, tables, or recognize people.

As a result of these limitations, visually impaired individuals often must rely on sighted assistance for many activities, reducing their independence and sometimes causing emotional distress or discomfort. Many individuals prefer not to ask for help because of personal preferences, cultural background, or embarrassment, making technological solutions that reduce dependency particularly valuable.

The limitations of traditional assistive tools have created a clear need for more advanced solutions. In the last decade, artificial intelligence (AI) and computer vision technologies have emerged as game-changers in developing new assistive devices that address these shortcomings.

The integration of AI into wearable devices represents a significant leap forward in assistive technology. Unlike traditional aids that provide simple obstacle detection through physical contact, AI-powered devices can analyze complex visual information and convert it into useful feedback for visually impaired users. This change from simple physical detection to intelligent environmental understanding marks a revolutionary advancement in assistive technology.

Wearable AI devices come in various forms, with smart glasses emerging as one of the most promising formats. These devices typically incorporate cameras, sensors, and processing capabilities to capture information about the environment and relay it to the user through audio feedback or haptic signals. The key advantage of the glasses format is its ability to capture the user's point of view naturally, providing information about obstacles at all heights, including critically important head-level hazards.

One notable example is OrCam MyEye, a device that attaches to the temple of eyeglasses and uses AI for various functions. The latest version, OrCam MyEye 3 Pro, provides visual and text descriptions along with an AI assistant, all in a wearable device. It can instantly read text from books and screens, recognize faces, and identify products, offering unprecedented access to environmental information. The device is specifically designed for blind and visually impaired users, focusing on supporting greater independence through AI-powered assistance.

Similarly, Envision Glasses, which are built on Google Glass hardware and integrate ChatGPT, offer another approach to wearable AI. These glasses can identify objects, read text, and provide valuable information in response to voice commands. They're particularly beneficial for individuals who have lost their sight later in life and need assistance navigating their surroundings. Users simply issue voice commands to identify and gather information about their environment, enhancing independence and spatial understanding.

The Smart Vision Glass (SVG) represents another breakthrough in this space. This non-invasive, lightweight device mounts on the temple of a spectacle frame and weighs just 53 grams with the frame. It's powered by AI and machine learning algorithms and features a camera with a flashlight, LiDAR sensors, and a braille-coded capacitive operating platform. The device's 'Walking Assistance' function employs LiDAR sensors to detect obstacles and provides audio navigation cues about the distance and nature of obstacles.
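To make the idea of an audio cue about "the distance and nature of obstacles" concrete, here is a minimal sketch of how a label and a distance reading could be turned into a short spoken phrase. It does not describe how SVG works internally: the function name, the urgency words, and the 1.0 m and 2.5 m thresholds are hypothetical values chosen for illustration.

```python
def describe_obstacle(label: str, distance_m: float) -> str:
    """Build a short phrase for text-to-speech from a label and a distance.

    The thresholds below are illustrative assumptions, not device settings.
    """
    if distance_m < 0:
        raise ValueError("distance cannot be negative")
    if distance_m < 1.0:
        urgency = "Stop"      # obstacle is within arm's reach
    elif distance_m < 2.5:
        urgency = "Caution"   # obstacle is a few steps away
    else:
        urgency = "Ahead"     # obstacle is farther off
    return f"{urgency}: {label}, {distance_m:.1f} meters"


if __name__ == "__main__":
    # Example readings that a depth sensor and object detector might produce.
    for label, distance in [("chair", 0.8), ("door", 2.0), ("wall", 4.0)]:
        print(describe_obstacle(label, distance))
```

Keeping the phrase short and putting the urgency word first is a design choice in this sketch: the listener hears the most important part of the cue before the details.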

Beyond obstacle detection, many of these devices offer additional functionalities that address other challenges faced by visually impaired individuals. For instance, the 'Things Around You' function in SVG assists users in orienting themselves within their environment by identifying objects like laptops, desks, doors, and flowers. It can even identify individuals and offer details such as age and gender when a person is present in the camera's field of view.

Face recognition capabilities add another valuable dimension to these devices. The SVG's 'Face Recognition' function allows users to store the names of family and friends by capturing their picture and verbally recording it with a name. This feature helps overcome one of the most socially challenging aspects of visual impairment—recognizing people in social settings.
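The enroll-then-recognize flow described here can be sketched as a small registry that stores one feature vector per named person and matches new faces by cosine similarity. This is only an illustration of the general technique, not SVG's actual method: the `FaceRegistry` class, the 0.8 threshold, and the assumption that a separate face-recognition model (not shown) has already turned each picture into a numeric embedding are all hypothetical.

```python
import math
from typing import Dict, List, Optional, Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("embeddings must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


class FaceRegistry:
    """Stores one embedding per named person (hypothetical helper class)."""

    def __init__(self, threshold: float = 0.8) -> None:
        self.threshold = threshold  # assumed minimum similarity for a match
        self._known: Dict[str, List[float]] = {}

    def enroll(self, name: str, embedding: Sequence[float]) -> None:
        """Register a person under the name recorded by the user."""
        self._known[name] = list(embedding)

    def identify(self, embedding: Sequence[float]) -> Optional[str]:
        """Return the best-matching enrolled name, or None if no match."""
        best_name: Optional[str] = None
        best_score = self.threshold
        for name, known in self._known.items():
            score = cosine_similarity(embedding, known)
            if score >= best_score:
                best_name, best_score = name, score
        return best_name
```

In use, a caller would enroll each family member or friend once, then pass the embedding of every newly detected face to `identify` and announce the returned name, staying silent when the result is `None`.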

Text reading functionality addresses another critical need. OrCam MyEye can read text from books and screens, while OrCam Read is specifically designed for individuals with low vision and reading fatigue. These features provide access to printed information that would otherwise require sighted assistance.

The development of these technologies has been rapid, with each new generation offering improved functionality and user experience. For instance, OrCam now offers several versions of its device, including OrCam MyEye 2 Pro, OrCam MyEye 3 Pro, OrCam Read 3, and OrCam Read 5, each tailored to specific user needs and preferences.

Nevertheless, challenges remain in the development and adoption of these technologies. One significant barrier is affordability. Most advanced assistive devices remain beyond the financial reach of many potential users, particularly in developing countries where the majority of visually impaired individuals reside. For example, OrCam MyEye technology currently costs approximately INR 210,951.13 (Indian Rupees), making it unaffordable for most potential users in India.

Other devices face similar affordability challenges. NuEyes e2, a wearable electronic magnification device designed for those with low vision, costs around INR 232,046.24. eSight glasses, which use a high-definition camera to project enhanced images onto OLED screens, are reported to cost nearly INR 843,382.61. These high costs significantly limit access to these technologies, particularly in low- and middle-income countries.

Performance in real-life conditions presents another challenge. Some devices struggle with certain environmental conditions, such as poor lighting or highly reflective surfaces. For instance, AI glasses can misread glossy packaging, tiny fonts, or fast-moving scenes. Additionally, privacy concerns arise with cloud-based features that may transmit images, making policy transparency increasingly important.

User feedback on these technologies has been mixed. In one study on SVG, participants indicated that the device struggled with real-time obstacle detection in crowded environments, and only 38.5% expressed satisfaction with the walking assistance feature. This highlights the ongoing challenge of creating technology that performs reliably in a variety of real-life scenarios.

Despite these challenges, the potential benefits of AI-powered object detection glasses are substantial. By providing information about obstacles at all heights, enabling text reading, facilitating face recognition, and offering environmental descriptions, these devices address many of the limitations of traditional assistive tools. They offer visually impaired individuals greater independence, improved safety, and enhanced interaction with their environment.

A four-week experiment involving 36 visually impaired participants found that AI navigation systems improved task efficiency and reduced both cognitive and psychological stress compared to traditional methods. Notably, participants with total blindness outperformed partially sighted participants in task performance, which suggests that voice-based navigation is effective even without residual vision.

As technology continues to advance and become more affordable, AI-powered object detection glasses hold enormous promise for transforming the lives of visually impaired individuals worldwide. The ongoing development of more accessible, affordable versions of these technologies will be crucial in ensuring that their benefits reach the hundreds of millions of people globally who could benefit from them.

Object detection glasses use AI and computer vision technology to analyze the environment through cameras and sensors. They provide real-time audio or haptic feedback about obstacles, text, faces, and objects in the surroundings, helping visually impaired users navigate more safely and independently.

AI-powered glasses can detect obstacles at all heights, including head-level hazards that white canes miss. They also offer additional features like text reading, face recognition, and object identification, providing richer environmental information and enhancing overall spatial awareness.

Currently, many advanced object detection glasses are expensive, with some models costing over 200,000 Indian Rupees. This high cost makes them unaffordable for many potential users, especially in developing countries where the majority of visually impaired individuals reside.

Visually impaired individuals face numerous challenges, including limited spatial awareness, difficulty detecting overhead obstacles, inability to read signs or recognize faces, and navigating unpredictable environments. These challenges can lead to accidents, reduced independence, and decreased quality of life.

The AI smart glasses market is projected to reach $26 billion by 2030, indicating significant growth potential. Future developments are likely to focus on improving battery life, enhancing processing power, and integrating human perception and machine intelligence more seamlessly, potentially changing how we interact with digital information in our daily lives.

Wearable Obstacle Detection Aid

https://www.epj-conferences.org/articles/epjconf/abs/2025/10/epjconf_iemphys2025_01017/epjconf_iemphys2025_01017.html

Assistive Navigation with Sensors

https://iarjset.com/wp-content/uploads/2024/12/IARJSET.2024.111233.pdf

Smart Assistive Device Design

https://www.ijera.com/papers/vol14no7/14075457.pdf

AI Assistive Smart Glasses System

https://www.ijisrt.com/ai-enabled-smart-glasses

Object Detection & Depth Estimation

https://www.researchgate.net/publication/393769195_Smart_Glasses_Portable_Navigation_Aid_for_the_Visually_Impaired_with_Object_Detection_and_Monocular_Depth_Estimation

AI-Powered Assistive Wearables

https://ijsrem.com/download/ai-powered-glasses-for-visually-impaired-people-using-object-detection/

Smart Vision Glass Navigation Functions

https://pmc.ncbi.nlm.nih.gov/articles/PMC12178407/

Smart Assistive Navigation System (voice + object detection)

https://www.scienceopen.com/hosted-document?doi=10.57197%2FJDR-2024-0086

Indoor Navigation Glasses with Audio Guidance

https://www.researchgate.net/publication/389609055_Indoor_Navigation_Glasses_for_the_Visually_Impaired_with_Deep_Learning_and_Audio_Guidance_Using_Google_Coral_Edge_TPU

Wearable Vision Aids Bibliometric Review

https://pmc.ncbi.nlm.nih.gov/articles/PMC11679352/

AI Object Recognition Wearable Vision Assistance

https://arxiv.org/pdf/2412.20059

YOLOv5 Smart Glasses Prototype

https://ijsra.net/sites/default/files/IJSRA-2024-0804.pdf